- cross-posted to:

- fuck_ai@lemmy.world

“The real benchmark is: the world growing at 10 percent,” he added. “Suddenly productivity goes up and the economy is growing at a faster rate. When that happens, we’ll be fine as an industry.”

Needless to say, we haven’t seen anything like that yet. OpenAI’s top AI agent — the tech that people like OpenAI CEO Sam Altman say is poised to upend the economy — still moves at a snail’s pace and requires constant supervision.

That is not at all what he said. He said that creating some arbitrary benchmark for the level or quality of the AI (e.g., whether it’s smarter than a 5th grader or as intelligent as an adult) is meaningless, and that the real measure is whether value is created and put out into the real world. He also names global growth of 10% as the benchmark. He doesn’t provide data that correlates growth with the use of AI, and I doubt that such data exists yet. Let’s not twist what he said into “Microsoft CEO says AI provides no value” when that is not what he said.

I think that’s pretty clear to people who get past the clickbait. Oddly enough though, if you read through what he actually said, the takeaway is basically a tacit admission: he is trying to level-set expectations for AI without directly admitting that the strategy of massively investing in LLMs is going bust and delivering no measurable value, so he can deflect with “BUT HEY CHECK OUT QUANTUM”.

AI is the immigrants of the left.

Of course he didn’t say this. The media want you to think he did.

“They’re taking your jobs”

deleted by creator

microsoft rn:

✋ AI

👉 quantum

can’t wait to have to explain the difference between asymmetric-key and symmetric-key cryptography to my friends!

Forgive my ignorance. Is there no known quantum-safe symmetric-key encryption algorithm?

i’m not an expert by any means, but from what i understand, most symmetric key and hashing cryptography will probably be fine, but asymmetric-key cryptography will be where the problems are. lots of stuff uses asymmetric-key cryptography, like https for example.
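To put rough numbers on that, here is a back-of-the-envelope sketch in Python. It assumes the usual rule of thumb that Grover’s algorithm gives a quadratic speedup on brute-force key search (so a k-bit symmetric key offers roughly k/2 bits of security against a quantum attacker), while Shor’s algorithm breaks schemes built on factoring or discrete logarithms on a large enough quantum computer. The function and table names are made up for illustration, and this is not a formal security analysis.

```python
# Rule-of-thumb estimates only; the names here are illustrative, not from any library.

def grover_effective_bits(key_bits: int) -> int:
    """Grover's search speeds up brute force quadratically,
    so a k-bit key behaves roughly like a k/2-bit key."""
    return key_bits // 2

# Schemes whose security rests on factoring or discrete logarithms,
# both of which Shor's algorithm solves in polynomial time.
SHOR_VULNERABLE = {"RSA", "DH", "ECDH", "ECDSA"}

def quantum_outlook(scheme: str, key_bits: int) -> str:
    """Return a one-line, hand-wavy summary of how a scheme fares."""
    if scheme in SHOR_VULNERABLE:
        return f"{scheme}-{key_bits}: broken by Shor's algorithm on a large enough quantum computer"
    return f"{scheme}-{key_bits}: ~{grover_effective_bits(key_bits)}-bit security under Grover"

if __name__ == "__main__":
    for scheme, bits in [("AES", 128), ("AES", 256), ("RSA", 2048), ("ECDH", 256)]:
        print(quantum_outlook(scheme, bits))
```

As far as I understand it, this is why the usual advice is to use 256-bit symmetric keys and to swap out the asymmetric parts (like the key exchange in https) for post-quantum schemes.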

Oh that’s not good. I thought TLS was quantum safe already.

I’ve been working on an internal project for my job - a quarterly report on the most bleeding edge use cases of AI, and the stuff achieved is genuinely really impressive.

So why is the AI at the top end amazing yet everything we use is a piece of literal shit?

The answer is the chatbot. If you have the technical nous to program machine learning tools it can accomplish truly stunning processes at speeds not seen before.

If you don’t know how to do, e.g., a Fourier transform, you lack the skills to use the tools effectively. That’s no one’s fault, not everyone needs that knowledge, but it does explain the gap between promise and delivery. It can only help you do what you already know how to do faster.

Same for coding: if you understand what your code does, it’s a helpful tool for unsticking part of a problem, but it can’t write the whole thing from scratch.

Exactly - I find AI tools very useful and they save me quite a bit of time, but they’re still tools. Better at some things than others, but the bottom line is that they’re dependent on the person using them. Plus the more limited the problem scope, the better they can be.

Yes, but the problem is that a lot of these AI tools are very easy to use, but the people using them are often ill-equipped to judge the quality of the result. So you have people who are given a task to do, and they choose an AI tool to do it and then call it done, but the result is bad and they can’t tell.

True, though this applies to most tools, no? For instance, I’m forced to sit through horrible presentations because someone was given a task to do, they created a PowerPoint (badly) and gave a presentation (badly). I don’t know if this is inherently a problem with AI…

For coding it’s also useful for doing the menial grunt work that’s easy but just takes time.

You’re not going to replace a senior dev with it, of course, but it’s a great tool.

My previous employer was using AI for intelligent document processing, and the results were absolutely amazing. They did sink a few million dollars into getting the LLM fine tuned properly, though.

LLMs could be useful for translation between programming languages. I recently asked one to generate server code from client code written in a different language, and the LLM-generated code was spot on!

I remain skeptical of using solely LLMs for this, but it might be relevant: DARPA is looking into their usage for C to Rust translation. See the TRACTOR program.

So why is the AI at the top end amazing yet everything we use is a piece of literal shit?

Just that you call an LLM “AI” shows how unqualified you are to comment on the “successes”.

What are you talking about? I read the papers published in mathematical and scientific journals and summarize the results in a newsletter. As long as you know the equivalent of undergrad statistics, calculus and algebra, you can read them. You don’t need a qualification; you can just Google each term you’re unfamiliar with.

While I understand your objection to the nomenclature, in this particular context all major AI-production houses including those only using them as internal tools to achieve other outcomes (e.g. NVIDIA) count LLMs as part of their AI collateral.

The mechanism of machine learning based on training data as used by LLMs is at its core statistics without contextual understanding, the output is therefore only statistically predictable but not reliable. Labeling this as “AI” is misleading at best, directly undermining democracy and freedom in practice, because the impressively intelligent looking output leads naive people to believe the software knows what it is talking about.

People who condone the use of the term “AI” for this kind of statistical approach are naive at best, or else snake oil vendors or outright enemies of humanity.

Can you name a company who has produced an LLM that doesn’t refer to it generally as part of “AI”?

can you name a company who produces AI tools that doesn’t have an LLM as part of its “AI” suite of tools?

How do those examples not fall into the category “snake oil vendor”?

what would they have to produce to not be snake oil?

Wrong question. “What would they have to market it as?” -> LLMs / machine learning / pattern recognition

Not this again… LLM is a subset of ML which is a subset of AI.

AI is very very broad and all of ML fits into it.

This is the issue with current public discourse though. AI has become shorthand for the current GenAI hypecycle, meaning for many AI has become a subset of ML.

Correction, LLMs being used to automate shit doesn’t generate any value. The underlying AI technology is generating tons of value.

AlphaFold 2 has advanced biochemistry research in protein folding by multiple decades in just a couple years, taking us from 150,000 known protein structures to 200 Million in a year.

I think you’re confused, when you say “value”, you seem to mean progressing humanity forward. This is fundamentally flawed, you see, “value” actually refers to yacht money for billionaires. I can see why you would be confused.

Well sure, but you’re forgetting that the federal government has pulled the rug out from under health research and has therefore made it so there is no economic value in biochemistry.

Thanks. So the underlying architecture that powers LLMs has application in things besides language generation like protein folding and DNA sequencing.

alphafold is not an LLM, so no, not really

You are correct that AlphaFold is not an LLM, but both are possible because of the same breakthrough in deep learning, the transformer, and so they share similar architectural components.

A Large Language Model is a translator basically, all it did was bridge the gap between us speaking normally and a computer understanding what we are saying.

The actual decisions all these “AI” programs do are Machine Learning algorithms, and these algorithms have not fundamentally changed since we created them and started tweaking them in the 90s.

AI is basically a marketing term that companies jumped on to generate hype because they made it so the ML programs could talk to you, but they’re not actually intelligent in the same sense people are, at least by the definitions set by computer scientists.

What algorithm are you referring to?

The fundamental ideas may not have changed (matrix multiplication plus a nonlinear function, deep learning via backpropagating derivatives, and gradient descent in general), but the actual algorithms sure have.
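For anyone who hasn’t seen those fundamentals spelled out, here is a minimal sketch in plain Python of all three: a matrix multiply followed by a nonlinear function (tanh), backpropagation via the chain rule, and a gradient descent update. The network size, toy dataset, learning rate and the `train` function itself are assumptions picked for illustration, not anyone’s production setup.

```python
import math
import random

def train(steps: int = 300, lr: float = 0.1, hidden: int = 3, seed: int = 0) -> list:
    """Train a tiny 2-layer network with full-batch gradient descent.
    Returns the mean loss recorded at every step."""
    rng = random.Random(seed)
    # Toy XOR-style dataset, chosen only for illustration.
    data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 0.0)]
    n = len(data)
    W1 = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(hidden)]
    b1 = [0.0] * hidden
    W2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = 0.0
    losses = []
    for _ in range(steps):
        gW1 = [[0.0, 0.0] for _ in range(hidden)]
        gb1 = [0.0] * hidden
        gW2 = [0.0] * hidden
        gb2 = 0.0
        loss = 0.0
        for x, y in data:
            # Forward pass: matrix multiply, then a nonlinear function (tanh).
            h = [math.tanh(sum(w * xi for w, xi in zip(W1[j], x)) + b1[j])
                 for j in range(hidden)]
            out = sum(w * hj for w, hj in zip(W2, h)) + b2
            err = out - y
            loss += 0.5 * err * err / n
            # Backward pass: chain rule from the output back to the first layer.
            gb2 += err / n
            for j in range(hidden):
                gW2[j] += err * h[j] / n
                dz = err * W2[j] * (1.0 - h[j] ** 2) / n  # tanh'(z) = 1 - tanh(z)^2
                gb1[j] += dz
                for k in range(2):
                    gW1[j][k] += dz * x[k]
        losses.append(loss)
        # Gradient descent: move every parameter against its gradient.
        b2 -= lr * gb2
        for j in range(hidden):
            W2[j] -= lr * gW2[j]
            b1[j] -= lr * gb1[j]
            for k in range(2):
                W1[j][k] -= lr * gW1[j][k]
    return losses

if __name__ == "__main__":
    history = train()
    print(f"first loss: {history[0]:.4f}, last loss: {history[-1]:.4f}")
```

Everything modern (attention, better optimizers, diffusion) is layered on top of this loop; the loop itself is the part that is decades old.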

For example, the transformer architecture (used by most modern models) based on multi-head self-attention, optimizers like AdamW, and the whole idea of diffusion for image generation are, I would say, quite disruptive.
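To make “self-attention” a bit more concrete, here is a rough single-head sketch in plain Python of scaled dot-product attention: softmax(QKᵀ/√d)·V. Real transformers run several such heads in parallel and concatenate the results, and add masking, learned projections and much more; the helper names and the weight matrices passed in are placeholders for illustration.

```python
import math

def softmax(row):
    """Numerically stable softmax over one list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(A, B):
    """Plain nested-list matrix product A @ B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention.
    X has one row per token; Wq, Wk, Wv project tokens to queries, keys, values.
    Returns (outputs, attention_weights)."""
    Q, K, V = matmul(X, Wq), matmul(X, Wk), matmul(X, Wv)
    d = len(K[0])
    # Every token scores every other token: dot(query, key) / sqrt(d).
    scores = [[sum(q * k for q, k in zip(qi, kj)) / math.sqrt(d) for kj in K] for qi in Q]
    weights = [softmax(row) for row in scores]
    # Each output row is a weighted average of the value vectors.
    return matmul(weights, V), weights

if __name__ == "__main__":
    identity = [[1.0, 0.0], [0.0, 1.0]]  # placeholder projections
    tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    out, attn = self_attention(tokens, identity, identity, identity)
    for row in attn:
        print([round(w, 3) for w in row])
```

The point is that every token gets to look at every other token in one step, which is the part that was genuinely new compared to the recurrent models that came before.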

Another point is that generative AI was always belittled in the research community until around 2015 (a subjective feeling; it would need a meta-study to confirm). The focus was mostly on classification, something not much talked about today in comparison.

Wow i didn’t expect this to upset people.

When I say it hasn’t fundamentally changed from an AI perspective, I mean there is no intelligence in artificial intelligence.

There is no true understanding of self, just what we expect to hear. There is no problem solving, the step by steps the newer bots put out are still just ripped from internet search results. There is no autonomous behavior.

AI does not meet the definitions of AI, and no amount of long winded explanations of fundamentally the same approach will change that, and neither will spam downvotes.

Btw I didn’t down vote you.

Your reply raises the question of which definition of AI you are using.

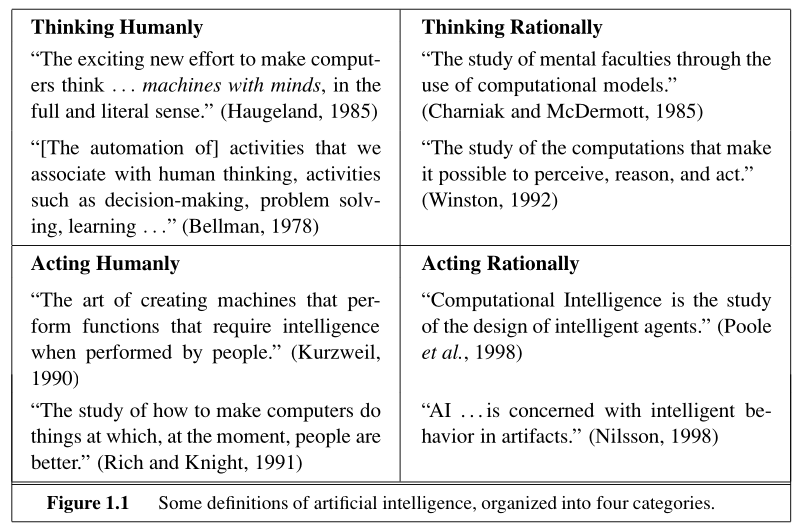

The above is from Russell and Norvig’s “Artificial Intelligence: A Modern Approach”, 3rd edition.

I would argue that of these 8 definitions, 6 apply to modern deep learning. Only the category titled “Thinking Humanly” would agree with you, but I personally think those definitions are self-defeating, i.e. they define AI in a way that is so dependent on humans that a machine could never have AI, which would make the word meaningless.

I’m just sick of marketing teams calling everything AI and ruining what used to be a clear goal by getting people to move the bar and compromise on what used to be rigid definitions.

I studied AI in school and am interested in it as a hobby, but these machines aren’t at the point of intelligence, despite us making them feel real.

I base my personal evaluations comparing it to an autonomous being with all the attributes I described above.

ChatGPT, and other chatbots, knows what it is because it searches the web for itself, and in fact it was programmed to repeat canned responses about itself when asked because it was saying crazy shit it was finding on the internet before.

Sam Altman and many other big names in tech have admitted that we have pretty much reached the limits of what current ML models can achieve, and we basically have to invent a new and more efficient method of ML to keep going.

If we were to go off Alan Turing’s last definition then many would argue even ChatGPT meets those definitions, but even he increased and refined his definition of AI over the years before he died.

Personally I don’t think we’re there yet, and by the definitions I was taught, back before AI could be whatever people called it, we aren’t there either. I’m trying to find who specifically made the checklist for intelligence I remember; if I do, I will post it here.

That’s because they want to use AI in a server scenario where clients login. That translated to American English and spoken with honesty means that they are spying on you. Anything you do on your computer is subject to automatic spying. Like you could be totally under the radar, but as soon as you say the magic words together bam!..I’d love a sling thong for my wife…bam! Here’s 20 ads, just click to purchase since they already stole your wife’s boob size and body measurements and preferred lingerie styles. And if you’re on McMaster… Hmm I need a 1/2 pipe and a cap…Better get two caps in case you cross thread on…ding dong! FBI! We know you’re in there! Come out with your hands up!

The only thing stopping me from switching to Linux is some college software (Won’t need it when I’m done) and 1 game (which no longer gets updates and thus is on the path to a slow sad demise)

So I’m on the verge of going Penguin.

What software / game is that? It could still run in Wine or Bottles.

You’re really forcing it at that point. Wine can’t run most of what I need to use for work. I’m excited for the day I can ditch Windows, but it’s not any time soon unfortunately. I’ll have to live with WSL.

But… i am still curious… what are you trying to run 😆

Not the same person with the program. Just another person making an excuse.

Yeah use Windows in a VM and your game probably just works too, I was surprised that all games I have on Steam now just work on Linux.

Years ago when I switched from OSX to Linux I just stopped gaming because of that but I started testing my old games and suddenly no problems with them anymore.

LLMs in non-specialized application areas basically reproduce search. In specialized fields, most do the work that automation, data analytics, pattern recognition, purpose-built algorithms and brute force did before. And yet the companies charge n times the amount for what is essentially these very conventional approaches, plus statistics. Not surprising at all. Just in awe that the parallels to snake oil weren’t immediately obvious.

And crashing the markets in the process… At the same time they came out with a bunch of mumbo jumbo and sci-fi babble about having a million-qubit quantum chip… 😂

Very bold move, in a tech climate in which CEOs declare generative AI to be the answer to everything, and in which shareholders expect line to go up faster…

I half expect to next read an article about his ouster.

My theory is that it’s only a matter of time until the firing sprees generate a big enough backlog of actual work, work that isn’t being covered by the minor productivity gains from AI, that investors start asking hard questions.

Maybe this is the start of the bubble bursting.

R&D is always a money sink

Especially when the product is garbage lmao

It isn’t R&D anymore if you’re actively marketing it.

Uh… Used to be, and should be. But the entire industry has embraced treating production as test now. We sell alpha release games as mainstream releases. Microsoft fired QC long ago. They push out world breaking updates every other month.

And people have forked over their money with smiles.

Microsoft fired QC long ago.

I can’t wait until my cousin learns about this, he’ll be so surprised.

I’d tell him but he’s at work. At Microsoft, in quality control.

Make sure to also tell him he’s doing a shit job!

He was probably fired long ago, but due to nonexistent QC, he was never notified.

AI is burning a shit ton of energy and researchers’ time though!

That’s not the worst. It is burning billions for the companies with no signs of them ever becoming close to profitable.

You say this like it’s a bad thing?

eh, the entirety of training GPT-4 and the whole world using it for a year turns out to be about 1% of the gasoline burnt just by the USA every single day. It’s barely a rounding error when it comes to energy usage.

That’s standard for emerging technologies. They tend to be loss leaders for quite a long period in the early years.

It’s really weird that so many people gravitate to anything even remotely critical of AI, regardless of context or even accuracy. I don’t really understand the aggressive need for so many people to see it fail.

For me personally, it’s because it’s been so aggressively shoved in my face in every context. I never asked for it, and I can’t escape it. It actively gets in my way at work (github copilot) and has already re-enabled itself at least once. I’d be much happier to just let it exist if it would do the same for me.

I just can’t see AI tools like ChatGPT ever being profitable. It’s a neat little thing that has flaws but generally works well, but I’m just putzing around in the free version. There’s no dollar amount that could be ascribed to the service that it provides that I would be willing to pay, and I think OpenAI has their sights set way too high with the talk of $200/month subscriptions for their top of the line product.

This summarizes it well: https://www.wheresyoured.at/wheres-the-money/

Wouldn’t call that a “summary”, but interesting read all the same. Thanks for the link.

It is fun to generate some stupid images a few times, but you can’t trust that “AI” crap with anything serious.

I was just talking about this with someone the other day. While it’s truly remarkable what AI can do, its margin for error is just too big for most if not all of the use cases companies want to use it for.

For example, I use the Hoarder app which is a site bookmarking program, and when I save any given site, it feeds the text into a local Ollama model which summarizes it, conjures up some tags, and applies the tags to it. This is useful for me, and if it generates a few extra tags that aren’t useful, it doesn’t really disrupt my workflow at all. So this is a net benefit for me, but this use case will not be earning these corps any amount of profit.

On the other end, you have Google’s Gemini, which now gives you an AI-generated answer to your queries. The point of this is to aggregate data from several sources within the search results and return it to you, saving you the time of having to look through several search results yourself. And like 90% of the time it actually does a great job. The problem is the combination of its goal, which is to save you from having to check individual sources, and its reliability rate. If I google 100 things and Gemini answers 99 of them accurately but completely hallucinates the 100th, then all 100 times I have to check its sources and verify that what it said was correct. Which means I’m now back to just… you know… looking through the search results one by one like I would have anyway without the AI.

So while AI is far from useless, it can’t be relied on now, and never will be, for anything important, and that’s where the money to be made is.
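The 99-out-of-100 argument above can be put into numbers with a quick sketch. It assumes each answer is wrong independently with the same probability, which is a simplification, and the function name is made up for illustration.

```python
def p_at_least_one_wrong(accuracy: float, n_queries: int) -> float:
    """Chance that at least one of n answers is wrong,
    assuming errors are independent and equally likely each time."""
    return 1.0 - accuracy ** n_queries

if __name__ == "__main__":
    for n in (1, 10, 100):
        print(f"{n:>3} queries at 99% accuracy: "
              f"{p_at_least_one_wrong(0.99, n):.1%} chance of at least one wrong answer")
```

Under those assumptions, 100 queries at 99% accuracy leave you with about a 63% chance of having been told at least one wrong thing, and since you can’t tell which answer it was, you are back to checking all of them.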

Even your manual search results may have you find incorrect sources, selection bias for what you want to see, heck even AI generated slop, so the AI generated results will just be another layer on top. Link aggregating search engines are slowly becoming useless at this rate.

While that’s true, the thing that stuck out to me is not even that the AI was misled by finding AI slop, or even by somebody falsely asserting something. I googled something with a clear yes or no answer: “Does X technology use Y protocol”. The AI came back with “Yes it does, and here’s how it uses it”, and upon visiting the reference page for that answer, it was documentation for that technology where it explained very clearly that X technology does NOT use Y protocol, and then went into detail on why it doesn’t. So even when everything lines up and the answer is clear and unambiguous, the AI can give you an entirely fabricated answer.